AI in PoSH: What Use Is a Machine to the People Who Sit, Listen, and Decide?

There is a quiet debate inside every Internal Committee. It doesn’t happen in official meetings. It happens in the moments around them, in the glance exchanged after a witness leaves, in the pause between two hearings, in the silence that follows reading a difficult complaint. And this debate is only getting louder as caseloads rise. Sexual harassment complaints across listed companies increased to 2,777 in FY24, up from 2,026 in FY23 and 1,313 in FY22, a sharp rise that reflects growing awareness, but also places unprecedented procedural pressure on ICs.

In this reality, one question repeats itself: “What use is AI to us, the people who sit across from other people, listen to their stories, and decide what is fair?”

PoSH inquiries are not process flows. They are human interactions shaped by fear, contradiction, hesitation, memory, emotion, and power. So what role can a machine possibly play here? The answer is not dramatic. It is grounded in the reality of what the IC actually holds and what it shouldn’t have to hold.

The Work AI Cannot Touch — The Human Centre of PoSH

The IC’s core work is human. No machine belongs in that space.

AI cannot interpret why someone’s voice trembled on a specific sentence. It cannot understand the cultural reasons a complainant waited months to report. It cannot notice the respondent’s discomfort when a particular incident is mentioned. Nor can it sense the tension between two colleagues or the exhaustion in someone’s eyes.

These are not data points. They are signals of lived experience. PoSH inquiries rely on empathy, neutrality, and contextual judgment: qualities no algorithm has, and no ethical system should pretend to have.

This is the essence of human governance.

The Work AI Should Take — The Parts That Drain the IC

There’s another side to the work of an Internal Committee, the side that’s essential, yet exhausting. It’s the hours spent untangling scattered emails to build a coherent timeline, cleaning language to remove bias in drafts, rewriting notices to ensure consistency in tone and structure, and summarising lengthy submissions into something usable. It’s the careful cross-checking of statements for contradictions, the revisiting of Sections 11 and 12 to confirm timelines, the meticulous formatting of minutes so they remain clear and defensible, and the thankless task of updating registers that should have updated themselves.

And the courts have made it unambiguously clear that this “administrative side” of PoSH is not optional. In Arti Devi v. Jawaharlal Nehru University (W.P.(C) 9407/2019), the Delhi High Court asked the JNU Registrar to produce the full case record, the complaint, the IC’s recommendations, and the evidence of how those recommendations were processed. The inability to produce complete documentation became a point of judicial concern.

None of this work calls for empathy, but all of it demands time and absolute precision. This is the space where AI can help, not because it is smart, but because it is tireless. In PoSH, the purpose of AI is not to interpret; it is to shoulder the weight of procedure so that humans can carry the responsibility of judgment.

The Real Fear IC Members Have — And Why It Matters

Most Internal Committees are not afraid of technology; they are afraid of interference. An uncontrolled AI model can easily treat emotional text as fact, over-simplify nuance, connect unrelated details, and sound confident even when it is wrong. It may respond in situations where silence is safer, or mimic authority that it does not have. These fears are not misplaced: PoSH leaves no room for guesswork, whether from humans or machines. This is precisely why AI governance matters: to ensure that an AI system is defined not only by what it can do, but by what it must never do.

AI Governance: Boundaries That Protect Fairness

The guardrails in Conduct’s PoSHGPT are built on a single principle: AI must never step into the human part of a PoSH inquiry. It cannot decide what is true, interpret intention, classify experiences, judge behaviour, infer meaning, advise on outcomes, or assess credibility. These functions belong only to people, to the members who bring context, empathy, and accountability to every case.
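One way to picture a boundary like this is a deny-by-default task router. The sketch below is purely illustrative and is not a description of how PoSHGPT is built: the `TaskKind` names, the `AI_ALLOWED` set, and the `route` function are all hypothetical, chosen only to mirror the procedural and human-only functions named in this article.

```python
from enum import Enum


class TaskKind(Enum):
    # Procedural work the article assigns to AI (hypothetical labels)
    BUILD_TIMELINE = "build_timeline"
    FORMAT_MINUTES = "format_minutes"
    SUMMARISE_SUBMISSION = "summarise_submission"
    TRACK_DEADLINE = "track_deadline"
    # Human-only functions listed in the paragraph above
    DECIDE_TRUTH = "decide_truth"
    INTERPRET_INTENTION = "interpret_intention"
    CLASSIFY_EXPERIENCE = "classify_experience"
    JUDGE_BEHAVIOUR = "judge_behaviour"
    INFER_MEANING = "infer_meaning"
    ADVISE_OUTCOME = "advise_outcome"
    ASSESS_CREDIBILITY = "assess_credibility"


# Explicit allow-list: anything not named here is routed to a human.
AI_ALLOWED = frozenset({
    TaskKind.BUILD_TIMELINE,
    TaskKind.FORMAT_MINUTES,
    TaskKind.SUMMARISE_SUBMISSION,
    TaskKind.TRACK_DEADLINE,
})


def route(task: TaskKind) -> str:
    """Return 'ai' only for explicitly allowed procedural tasks."""
    return "ai" if task in AI_ALLOWED else "human"
```

The design choice worth noting is the direction of the default. Because the router checks an allow-list rather than a block-list, a task type that nobody anticipated falls to the committee, not to the model.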

These boundaries are not limitations; they are safeguards. They protect both the tool and the process. When an AI system recognises where its role ends, it becomes a reliable partner, one that can handle process without crossing into decision. But the moment it forgets that boundary, when it tries to sound certain or imply understanding, it stops being an aid and becomes a risk. AI governance, in this sense, isn’t about control; it’s about discipline. It ensures technology serves the inquiry rather than replacing it.

Human Governance: The Authority That Cannot Be Replaced

PoSH inquiries are built to keep humans at the centre. Human governance means that people listen, question, read emotion, evaluate contradictions, choose what is fair, and hold accountability. These are decisions that no algorithm can replicate because they require empathy, context, and conscience.

An AI model cannot be responsible for the outcome of a hearing. But it can ensure that the Internal Committee never misses a procedural step, a legal deadline, or a structural detail that might weaken the inquiry. AI protects the process; humans protect the people. And together, they protect the integrity of justice.
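A deadline check of this kind is simple date arithmetic. The sketch below is a minimal illustration under stated assumptions: the `Deadline` class, the `needs_attention` helper, and the seven-day warning window are hypothetical, and the 90-day figure in the usage example is a placeholder that should be confirmed against the Act and the organisation’s own policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Deadline:
    label: str
    start: date
    days_allowed: int  # placeholder value; confirm against the statute and policy

    @property
    def due(self) -> date:
        # Calendar days counted from the start date
        return self.start + timedelta(days=self.days_allowed)

    def days_left(self, today: date) -> int:
        # Negative when the deadline has already passed
        return (self.due - today).days


def needs_attention(deadlines, today, warn_within=7):
    """Labels of deadlines that are overdue or due within `warn_within` days."""
    return [d.label for d in deadlines if d.days_left(today) <= warn_within]


# Usage with made-up cases and dates
cases = [
    Deadline("Case A: complete inquiry", date(2024, 1, 1), 90),
    Deadline("Case B: complete inquiry", date(2024, 3, 1), 90),
]
flagged = needs_attention(cases, today=date(2024, 3, 25))
```

Here only Case A is flagged, because its due date falls six days after the chosen `today`. The code does nothing more than surface dates; what to do about a flagged deadline remains the committee’s call.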

The Real Answer to the Debate

When IC members ask, “What use is AI to us?” the answer isn’t technical; it’s practical. AI is most valuable in the spaces where the IC’s emotional energy should never have to go: administration, structure, organisation, clarity, documentation discipline, legal phrasing, and procedural checks.

The IC shouldn’t be drained by formatting annexures when their energy is needed to listen deeply. They shouldn’t spend hours fixing registers when their focus should be on understanding testimonies and assessing fairness. AI exists to carry the procedural burden so that humans can carry the moral one.

This isn’t innovation for its own sake. It’s respect for the complainant, the respondent, and the committee that bears the weight of judgment.

The Future of PoSH and AI is Not Replacement — It Is Partnership

PoSH inquiries will always be human-first, but they don’t have to be human-only. In a system where every delay, missing note, or inconsistent record can weaken a case, ICs need support that is accurate, quiet, and reliable.

That’s where AI helps: not by deciding, interpreting, or replacing human judgment, but by strengthening the spine of the process. AI that stays modest, supervised, and firmly within boundaries can remove administrative strain, reduce procedural risk, and give IC members the space to stay fully present, empathetic, and unbiased.

AI should never sit in the IC’s chair. But it can sit beside the IC, steady, organised, and invisible in the background.

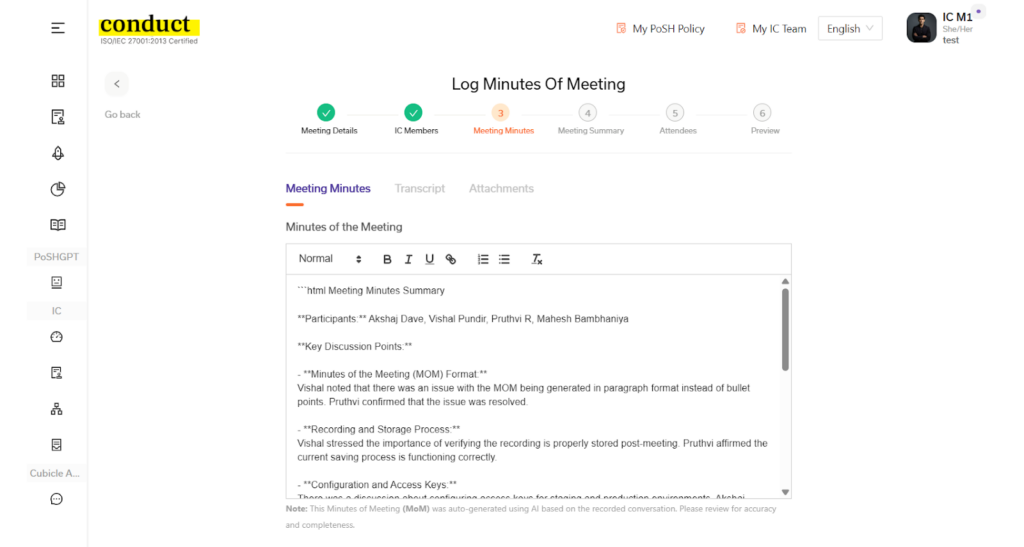

Conduct is built on exactly this principle: AI that knows its limits. It records meetings, generates transcripts and minutes, tracks timelines, and organises evidence without ever crossing into interpretation or decision-making. The result is a PoSH process that remains deeply human in its judgment, and digitally precise in its documentation, making every inquiry stronger, safer, and more defensible.

Key takeaways

- AI has value in PoSH only when it carries the procedural load, not when it attempts to read, interpret, or decide human experiences.

- Human governance remains the centre of PoSH inquiries, while AI governance ensures that technology stays within strict, ethical boundaries.

- The partnership between AI and the IC works best when AI protects process and humans protect people, creating a fairer and more defensible inquiry.