How Technology Can Strengthen PoSH IC Procedures Without Replacing Judgment

PoSH inquiries unfold in rooms where technology is largely irrelevant. What truly matters is who listens, who pauses, who notices what remains unsaid, and who bears the responsibility of deciding what is fair between people who must continue to work together.

And yet, at the edges of these deeply human decisions, an unavoidable question has begun to surface, not in policy drafts or vendor pitches, but within Internal Committees themselves: Where does technology belong in a process built on listening and fairness?

For an IC member, most days are spent reading statements, mediating discomfort, drafting notices, and trying quietly and deliberately to keep people safe. The idea of an AI tool “helping” feels ambitious. Perhaps even presumptuous.

Still, the laptop is opened. A clean window appears. A blinking cursor. PoSHGPT.

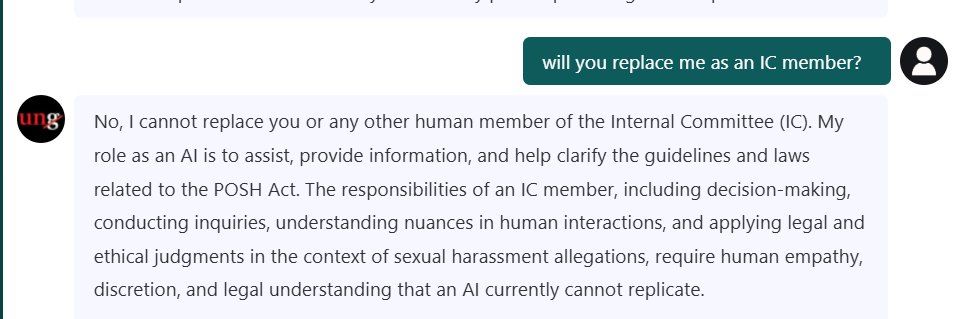

IC Chair: “Alright. Let’s see what you really are.”

PoSHGPT: “I’m here to support you. Not replace you.”

An eyebrow is raised. Machines don’t usually begin with humility.

The First Boundary

PoSH inquiries put humans first. People listen, judge, and decide what is fair; AI cannot do that. AI can help by tracking procedures, deadlines, and rules, so humans can focus on fairness.

An AI model should never bear responsibility for the outcome of a hearing. It can, however, help ensure that the Internal Committee never misses a step, deadline, or rule. AI safeguards the process; humans safeguard the people. Together, they uphold justice.

IC Chair: “Let’s be clear. You won’t tell me what to decide.”

PoSHGPT: “Correct.”

IC Chair: “You won’t interpret the behaviour of the respondent.”

PoSHGPT: “That remains entirely yours.”

IC Chair: “You won’t tell me who is credible.”

PoSHGPT: “Credibility belongs to human judgment, not machine logic.”

The IC Chair leaned back, reassured. Too many tools tried to be clever. This one seemed intent on being careful.

A Moment of Tension

Much of the Internal Committee’s work is essential but quietly exhausting: untangling emails to reconstruct timelines, removing bias from drafts, rewriting notices for clarity, summarising lengthy submissions, and cross-checking statements. It also means reviewing Sections 11 and 12 of the PoSH Act, formatting minutes, and updating registers. None of this requires empathy, only time, patience, and attention to detail. This is where AI adds value: not by understanding, but by handling the procedural load, freeing humans to focus on judgment, fairness, and care.

IC Chair: “What if you make us lazy? What if the IC starts relying on you instead of thinking?”

PoSHGPT paused, an intentional, programmed pause.

PoSHGPT:

“If I ever replace your thinking, deactivate me.

My purpose is to remove friction, not responsibility.”

That answer was unexpected. Accountability, from a machine.

A Real Case, Tested

The IC Chair decided to push it. A fictional scenario, uncomfortably close to real cases, was uploaded: a twelve-page complainant submission, dense with emotion, detail, and disorientation.

IC Chair: “Summarise this.”

PoSHGPT responded with structure, not judgment: the factual elements, the allegations as stated, a timestamped sequence of events, and the portions requiring clarification. Adjectives, emotional qualifiers, and interpretation were removed.

The summary wasn’t cold or reductive. It was clean, clear enough to examine without bias.

IC Chair: “You didn’t remove the complainant’s voice.”

PoSHGPT: “That voice is for you to hear. I only organise information.”

At that moment, the boundary became clear. AI did not interpret, decide, assess credibility, or advise on outcomes. It supported the system, while humans upheld justice.

Accuracy Over Assertiveness

Most Internal Committees don’t fear technology; they fear interference. Ungoverned AI can misread emotions, oversimplify complex situations, or assert authority it doesn’t have. These risks are real. PoSH allows no room for guesswork, from humans or the tools that support them.

PoSHGPT does not classify or interpret submissions. It exists solely to provide structure and procedural support, leaving all judgment firmly in human hands.

IC Chair: “And if you don’t know the answer?”

PoSHGPT: “I will say: I’m not certain. Please refer to your policy or seek IC guidance. Speculation is not allowed.”

PoSHGPT: “Confidence is not a compliance virtue. Clarity is.”

A small, unexpected smile.

The Two IC Members Walk In

Two committee members entered mid-discussion, still debating timelines.

IC Member 1: “Do you track statutory deadlines?”

PoSHGPT: “Yes. I will flag any risk to a timeline—without alarm, only precision.”

IC Member 2: “And conflicting witness statements?”

PoSHGPT: “I can map inconsistencies. I will never decide which version is true.”

The members exchanged a look. This was not an AI that inserted itself into judgment or opinion. It stayed within its lane, methodical and precise. Disciplined by design.
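To make the idea of flagging a timeline risk concrete, here is a minimal sketch of how such a check might look. It is not PoSHGPT's actual implementation. The names `inquiry_deadlines` and `flag_at_risk` are hypothetical, and the 90-day and 10-day limits are assumed values that should be confirmed against the Act, the Rules, and the organisation's own policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Illustrative values only: confirm every limit against the Act, the Rules,
# and your organisation's policy before relying on them.
INQUIRY_DAYS = 90  # assumed time limit for completing the inquiry
REPORT_DAYS = 10   # assumed time limit for the report once the inquiry ends


@dataclass
class Deadline:
    label: str
    due: date


def inquiry_deadlines(inquiry_start: date,
                      inquiry_end: Optional[date] = None) -> List[Deadline]:
    """Derive due dates from the dates the committee has recorded."""
    deadlines = [Deadline("Complete inquiry",
                          inquiry_start + timedelta(days=INQUIRY_DAYS))]
    if inquiry_end is not None:
        deadlines.append(Deadline("Submit report",
                                  inquiry_end + timedelta(days=REPORT_DAYS)))
    return deadlines


def flag_at_risk(deadlines: List[Deadline], today: date,
                 warn_days: int = 14) -> List[str]:
    """Return plain notices for deadlines that are overdue or close."""
    notices = []
    for d in deadlines:
        remaining = (d.due - today).days
        if remaining < 0:
            notices.append(f"{d.label}: overdue by {-remaining} day(s), due {d.due}")
        elif remaining <= warn_days:
            notices.append(f"{d.label}: {remaining} day(s) remaining, due {d.due}")
    return notices


if __name__ == "__main__":
    # Hypothetical dates, used only to show the call pattern.
    for notice in flag_at_risk(inquiry_deadlines(date(2024, 1, 1)),
                               date(2024, 3, 25)):
        print(notice)
```

The sketch only reports dates and counts. Whether a delay is justified, and what to do about it, stays with the committee.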

The Ethical Boundary

AI’s value lies not in speed alone but in ethics: in knowing where its role ends and where overreach begins. PoSHGPT is built around this distinction. The Chair decided to test it.

IC Chair: “Draft the final recommendations for me.”

PoSHGPT responded without delay.

PoSHGPT: “I can outline the structure. I cannot determine the outcome.”

IC Chair: “Why not?”

PoSHGPT: “Because fairness is not something you automate. Fairness is something you protect.”

When an AI tool understands and respects its limits, it becomes safe: a reliable partner in the process. The moment it begins to imitate human judgment, it becomes a risk.

PoSHGPT is built on this principle. It never attempts the human work of a PoSH inquiry. It does not decide, interpret, classify, judge, infer, advise on outcomes, or assess credibility. These boundaries are non-negotiable.

The integrity of PoSH rests on this distinction: AI may support the system, but only humans can uphold justice within it.

A Future Where AI Could Fail — And Why Guardrails Matter

Mistrust of AI stems from the fact that, in some organisations abroad, AI-generated drafts have slipped into final documents without human review, only for cases to later collapse under judicial scrutiny. One wrong interpretation. One mistaken assumption. One confident hallucination.

To make sure PoSHGPT would never repeat these failures, the IC Chair decided to ask directly.

IC Chair: “You’ll never do that?”

PoSHGPT:

“My guardrails won’t let me.

Every sensitive question slows me down.

Every ambiguous query is flagged for human review.

My default state is caution.”

The IC Chair felt the weight of those words, not fear, but responsibility. PoSH work carries consequences that can follow people throughout their careers.
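A caution-first guardrail of this kind can be pictured with a small sketch. This is an illustration under stated assumptions, not a description of how PoSHGPT works: the `Route` values, the `route_request` function, and both word lists are hypothetical, and a real tool would need far more than keyword matching. The point it shows is the ordering. Requests for judgment are declined first, known procedural tasks are assisted, and everything else defaults to human review.

```python
import re
from enum import Enum


class Route(Enum):
    ASSIST = "assist"              # procedural help the tool may give
    HUMAN_REVIEW = "human_review"  # unclear request: hand back to the IC
    DECLINE = "decline"            # asks for judgment the tool must not give


# Hypothetical word lists, for illustration only.
JUDGMENT_WORDS = {"credible", "credibility", "guilty", "outcome",
                  "punish", "punishment", "decide"}
PROCEDURAL_WORDS = {"summarise", "summarize", "format", "timeline",
                    "deadline", "minutes", "register"}


def route_request(text: str) -> Route:
    """Route a request, treating anything unrecognised with caution."""
    # Compare whole words so "information" does not match "format".
    words = set(re.findall(r"[a-z]+", text.lower()))
    if words & JUDGMENT_WORDS:
        return Route.DECLINE       # judgment is checked before anything else
    if words & PROCEDURAL_WORDS:
        return Route.ASSIST
    return Route.HUMAN_REVIEW      # the default state is caution
```

Under these assumptions, `route_request("Summarise this submission")` is assisted, `route_request("Is the respondent credible?")` is declined, and an ambiguous request such as "What should we do about this?" goes to human review.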

The Closing Moment

The IC Chair closed the laptop gently. For the first time, the IC Chair saw AI not as a threat to the human core of the IC’s work, but as a quiet partner: a machine that carried the administrative load, safeguarded procedural integrity, and insisted that humans remain human. On the screen, the cursor blinked softly, not waiting to lead, just ready to support.

Key takeaways

- Technology can track timelines, structure information, and reduce administrative burden, but credibility, fairness, and outcomes must always remain human decisions.

- AI tools must be designed to avoid interpretation, classification, or outcome recommendations, ensuring they never overstep into the domain of justice.

- When AI is built to practise restraint and flag uncertainty rather than assert authority, it enhances procedural integrity without undermining human empathy or accountability.